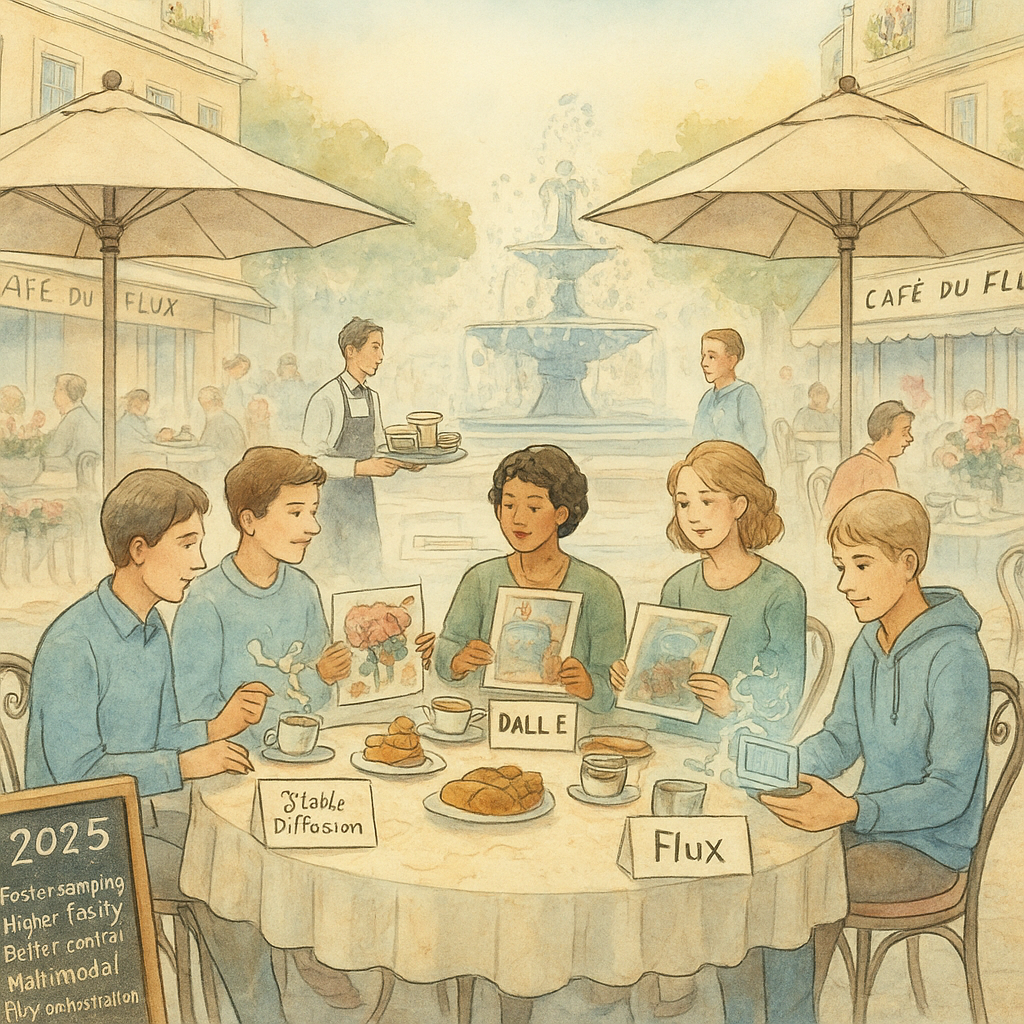

AI Image Generation in 2025: Breakthroughs in Stable Diffusion, DALL-E, Midjourney, and the Rise of Flux

Imagine typing a few words—"a futuristic cityscape at dusk with flying cars"—and watching an AI conjure a breathtaking, photorealistic scene in seconds. That's the magic of text-to-image AI, and in 2025, it's no longer science fiction. From hobbyists tweaking Stable Diffusion models on their laptops to professionals using Midjourney for ad campaigns, image generation tools are democratizing art like never before. But with rapid advancements come new challenges: ethical dilemmas, watermark controversies, and the blurring line between human and machine creativity. Why should you care? Because these technologies are reshaping industries from design to entertainment, and staying ahead means understanding the latest in Stable Diffusion, DALL-E, Midjourney, Flux, and beyond.

As an expert research journalist, I've scoured the web for the freshest insights from November 2025. Drawing from credible sources like The Verge and TechCrunch, this post unpacks the key developments in AI image generation. We'll dive into open-source innovations, commercial heavyweights, enterprise integrations, and the road ahead—all while keeping things accessible for creators and curious minds alike.

Open-Source Revolution: Stable Diffusion's Enduring Dominance and LoRA Magic

At the heart of the open-source AI art movement lies Stable Diffusion, the text-to-image model that's been a game-changer since its debut. By November 2025, Stable Diffusion has evolved far beyond its roots, powering everything from personal AI art experiments to custom image models for niche applications. What makes it stand out? Its flexibility. Unlike closed systems, Stable Diffusion lets users download and modify "checkpoints"—pre-trained model files that serve as snapshots of the AI's learning—and fine-tune them with techniques like LoRA (Low-Rank Adaptation).

LoRA, for the uninitiated, is a lightweight way to adapt a base Stable Diffusion checkpoint without retraining the entire massive model from scratch. Think of it as adding a specialized lens to a camera: you can train a LoRA on just 10-50 images to specialize in, say, cyberpunk aesthetics or realistic portraits, resulting in files as small as 10MB. According to a September 2025 guide from Stable Diffusion Art, LoRA models are "10 to 100 times smaller than full checkpoints," making them ideal for creators with limited hardware. This efficiency has exploded in popularity, with communities like Civitai hosting millions of user-shared LoRAs and checkpoints.
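The size savings come from how LoRA works. Instead of updating a checkpoint's weight matrix W (d × k) directly, LoRA freezes W and trains two thin matrices, B (d × r) and A (r × k). Their product is added back as W + (α/r)·BA, so only A and B need to be saved. Below is a minimal pure-Python sketch of that merge and of the per-layer parameter arithmetic. The layer size of 1280 and rank of 8 are illustrative assumptions. A real LoRA file patches many layers, so whole-file ratios will differ.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    inner = len(b)
    return [
        [sum(a[i][t] * b[t][j] for t in range(inner)) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def lora_merge(w, a, b, alpha, rank):
    """Return W + (alpha / rank) * (B @ A).

    W is d x k (the frozen checkpoint weight), B is d x r, A is r x k.
    Only A and B are trained and stored in the LoRA file.
    """
    scale = alpha / rank
    delta = matmul(b, a)  # d x k low-rank update
    return [
        [w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
        for i in range(len(w))
    ]


def trainable_params(d, k, rank):
    """Parameter counts for one d x k layer: full fine-tune vs. rank-r LoRA."""
    return d * k, rank * (d + k)


# Tiny merge: a 2x2 identity patched by a rank-1 update.
w = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [2.0]]  # d x r = 2 x 1
a = [[3.0, 4.0]]    # r x k = 1 x 2
print(lora_merge(w, a, b, alpha=1.0, rank=1))  # -> [[4.0, 4.0], [6.0, 9.0]]

# Illustrative layer size (assumption): 1280 x 1280 at rank 8.
full, lora = trainable_params(1280, 1280, rank=8)
print(full, lora, full // lora)  # -> 1638400 20480 80
```

In the original LoRA formulation, B starts at zero, so the adapted model initially behaves exactly like the base checkpoint. Lowering the rank shrinks the file further: at rank 4, the per-layer LoRA count drops to 10,240.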

Recent benchmarks highlight Stable Diffusion's staying power. In an August 2025 roundup by AIArty, community models such as Realistic Vision V6.0 (photorealism) and Juggernaut XL (high-resolution landscapes) ranked at the top, often outperforming pricier alternatives. A September Civitai review emphasized Stable Diffusion's role as a "community model hub," where users collaborate on extensions for AI art styles ranging from anime to hyper-detailed fantasy. The ecosystem also keeps evolving: developers have released SDXL 1.0 variants optimized for faster generation on consumer GPUs, addressing complaints about slow rendering times.

For beginners, getting started is straightforward: download a checkpoint from Hugging Face, install a UI like Automatic1111's web interface, and activate a LoRA by adding a short tag to your prompt.
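In Automatic1111's web interface, a LoRA is activated with a `<lora:filename:weight>` tag inside the prompt. The weight controls how strongly the LoRA is applied. Here is a small helper sketch that assembles such prompts. The helper names and the LoRA file name `cyberpunk_style` are hypothetical and used only for illustration.

```python
from typing import List, Tuple


def lora_tag(name: str, weight: float = 1.0) -> str:
    """Format one LoRA activation tag in Automatic1111's <lora:name:weight> syntax."""
    # :g drops trailing zeros, so 1.0 becomes "1" and 0.8 stays "0.8".
    return f"<lora:{name}:{weight:g}>"


def build_prompt(base: str, loras: List[Tuple[str, float]]) -> str:
    """Append one tag per (hypothetical) LoRA file name and weight to a base prompt."""
    tags = " ".join(lora_tag(name, weight) for name, weight in loras)
    return f"{base}, {tags}" if tags else base


print(build_prompt("portrait of an astronaut, cinematic lighting", [("cyberpunk_style", 0.8)]))
# -> portrait of an astronaut, cinematic lighting, <lora:cyberpunk_style:0.8>
```

Weights below 1 blend the LoRA's style more subtly. Several tags can be stacked in one prompt to combine styles.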

Commercial Powerhouses: DALL-E, Midjourney, and Flux's Fresh Edge

While open-source tools like Stable Diffusion offer customization, commercial platforms deliver polish and speed. OpenAI's DALL-E series continues to lead in precision, with its latest iterations integrated into ChatGPT for seamless text-to-image workflows. By mid-2025, DALL-E 3's successor, the gpt-image-1 model, became available through OpenAI's API, letting apps from Adobe to Figma generate and edit images natively. As TechCrunch reported in April 2025, the model excels at rendering text within images and following complex prompts; requests for "Ghibli-style action figures" went viral and strained OpenAI's servers.
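For developers, a generation request is a single HTTP call. Below is a minimal standard-library sketch. It assumes OpenAI's `POST /v1/images/generations` endpoint and an API key that you supply. gpt-image-1 returns images as base64 strings in a `b64_json` field rather than as URLs. The helper function names are hypothetical, and error handling is omitted.

```python
import base64
import json
import urllib.request

API_URL = "https://api.openai.com/v1/images/generations"


def build_request_body(prompt: str, size: str = "1024x1024", n: int = 1) -> dict:
    """JSON body for an image-generation request with the gpt-image-1 model."""
    return {"model": "gpt-image-1", "prompt": prompt, "size": size, "n": n}


def decode_first_image(payload: dict) -> bytes:
    """Extract the first image from a response; gpt-image-1 returns base64 data."""
    return base64.b64decode(payload["data"][0]["b64_json"])


def generate_image(prompt: str, api_key: str) -> bytes:
    """Send the request and return raw image bytes (performs a network call)."""
    body = json.dumps(build_request_body(prompt)).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return decode_first_image(json.load(response))
```

To use it, call `generate_image("a Ghibli-style action figure", key)` with your own key, then write the returned bytes to a `.png` file.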

Midjourney, the Discord-based darling of AI art communities, isn't far behind. Its V7 model, released in early April 2025, marked the first major update in nearly a year, focusing on artistic flair and community-driven refinements. TechCrunch highlighted how V7 handles "aesthetically pleasing works" better than ever, though it still faces lawsuits over training data scraped from artists' works without consent. For users, Midjourney's strength lies in its social vibe: prompt a surreal landscape, and the bot generates variations you can upscale or remix in real-time. By November 2025, integrations with tools like Canva have made Midjourney's outputs more accessible for non-artists, blending AI art into everyday design.
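Midjourney prompts are plain descriptions followed by optional parameters. Examples include `--ar` for aspect ratio, `--v` for model version, and `--stylize` for how strongly Midjourney's own aesthetic is applied. Below is a small sketch that assembles such a prompt for pasting after `/imagine`. The function name and default values are assumptions made for illustration.

```python
from typing import Optional


def midjourney_prompt(
    description: str,
    aspect_ratio: str = "16:9",
    version: int = 7,
    stylize: Optional[int] = None,
) -> str:
    """Append Midjourney-style parameters to a plain-language description."""
    parts = [description.strip(), f"--ar {aspect_ratio}", f"--v {version}"]
    if stylize is not None:
        parts.append(f"--stylize {stylize}")
    return " ".join(parts)


print(midjourney_prompt("a surreal landscape of floating islands", stylize=250))
# -> a surreal landscape of floating islands --ar 16:9 --v 7 --stylize 250
```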

Enter Flux, the open-yet-commercial hybrid generating buzz in late 2025. Developed by Black Forest Labs, Flux 1.1 Pro Ultra delivers "very crisp and creative images with high resolution output," according to a November 2 Facebook analysis by AI expert Matt Farmer. It shines in prompt adherence, realism, and text rendering, often edging out DALL-E in benchmarks for natural lighting and composition. A Mashable comparison from October 2025 pitted Flux against Midjourney and Google's Imagen 4, praising its balance of speed and quality. Unlike purely open models such as Stable Diffusion, Flux sells pro tiers to enterprises. Its open-weight variants (FLUX.1 [dev] and FLUX.1 [schnell]) can still be fine-tuned with LoRAs, bridging the gap between hobbyists and pros.

These tools aren't perfect. An Ars Technica piece from March 2025 warned of DALL-E's "potent" potential to provoke, citing risks like deepfakes. Yet, their evolution—from Midjourney's artistry to Flux's realism—shows text-to-image AI maturing into a versatile powerhouse for 2025 creators.

Enterprise Adoption: How Big Tech is Weaving AI Image Generation into Workflows

The real sea change in 2025? AI image generation isn't siloed in art apps anymore; it's spreading into enterprise tools. Microsoft, long a backer of OpenAI, struck out on its own with MAI-Image-1, its first in-house text-to-image model. According to The Verge, MAI-Image-1 launched in October and rolled out to Bing Image Creator by November 4. It "excels at photorealistic imagery" such as dynamic lighting and landscapes. Trained on diverse datasets with input from creative professionals, it avoids "generically-stylized outputs" and ranks in the top 10 of the LMArena (formerly LMSYS) text-to-image leaderboard. It is also integrated into Copilot, letting users generate images mid-conversation and boosting productivity for marketers and designers.

Google is pushing boundaries too. Its Imagen 4, unveiled at I/O in May 2025 (TechCrunch), renders "fine details" like water droplets and fur more faithfully than Imagen 3. But controversies linger: a March TechCrunch report revealed users exploiting Gemini 2.0 Flash to remove watermarks from copyrighted images, prompting Google to tighten safeguards. Despite this, Imagen powers tools in Vertex AI, making text-to-image accessible to developers building their own image applications.

Design platforms are all-in. Figma's October 30 acquisition of Weavy, an AI-powered media generator, supercharges its ecosystem (TechCrunch). Weavy lets users chain different AI models on an infinite canvas. A user can generate an image from a text prompt, then branch into edits or video variants. Adjust lighting or camera angles via text, and you have professional mockups without starting from zero. Canva moved even earlier: in April, it added AI image generation to its photo editor, creating backgrounds that match layouts automatically.

These integrations signal a shift: AI art is no longer a novelty but a workflow essential. As The Verge noted for Microsoft's MAI-Image-1, it's "the next step" in blending generation with everyday tools, though ethical training data remains a hot-button issue.

Navigating Challenges: Ethics, Access, and the Horizon for AI Art

For all its promise, 2025's image generation boom isn't without hurdles. Copyright battles rage—Midjourney faces suits for scraping art without permission, echoing broader concerns in Stable Diffusion communities. Watermark removal, as seen with Google's tools, raises misuse fears, from fake ads to misinformation. LoRAs amplify this: while they enable personalized checkpoints, bad actors could fine-tune models for deceptive AI art.

Accessibility is improving, but gaps persist. Open-weight models like Stable Diffusion and Flux's open variants can run locally for free, but they require technical know-how and a capable GPU. Commercial options like DALL-E charge per image, pricing out some creators. Safety features also vary, according to November 2025 rankings from AlphaCorp.ai: Midjourney scores high on usability but lower on bias mitigation.

Looking ahead, expect multimodal leaps—text-to-image evolving into video and 3D. Black Forest Labs hints at Flux video extensions, while OpenAI teases DALL-E integrations with Sora. As Tom's Guide's August 2025 roundup predicted, hybrid models combining Stable Diffusion's openness with Midjourney's polish will dominate.

In conclusion, 2025 has solidified AI image generation as a creative force multiplier. From LoRA-tweaked Stable Diffusion checkpoints to Flux's pro-grade realism, tools like DALL-E and Midjourney are unlocking imaginations worldwide. Yet, as we embrace this text-to-image renaissance, we must champion ethical AI art: transparent training, robust safeguards, and equitable access. What prompt will you try first? The future of creation is yours to generate—but let's make it responsible.
