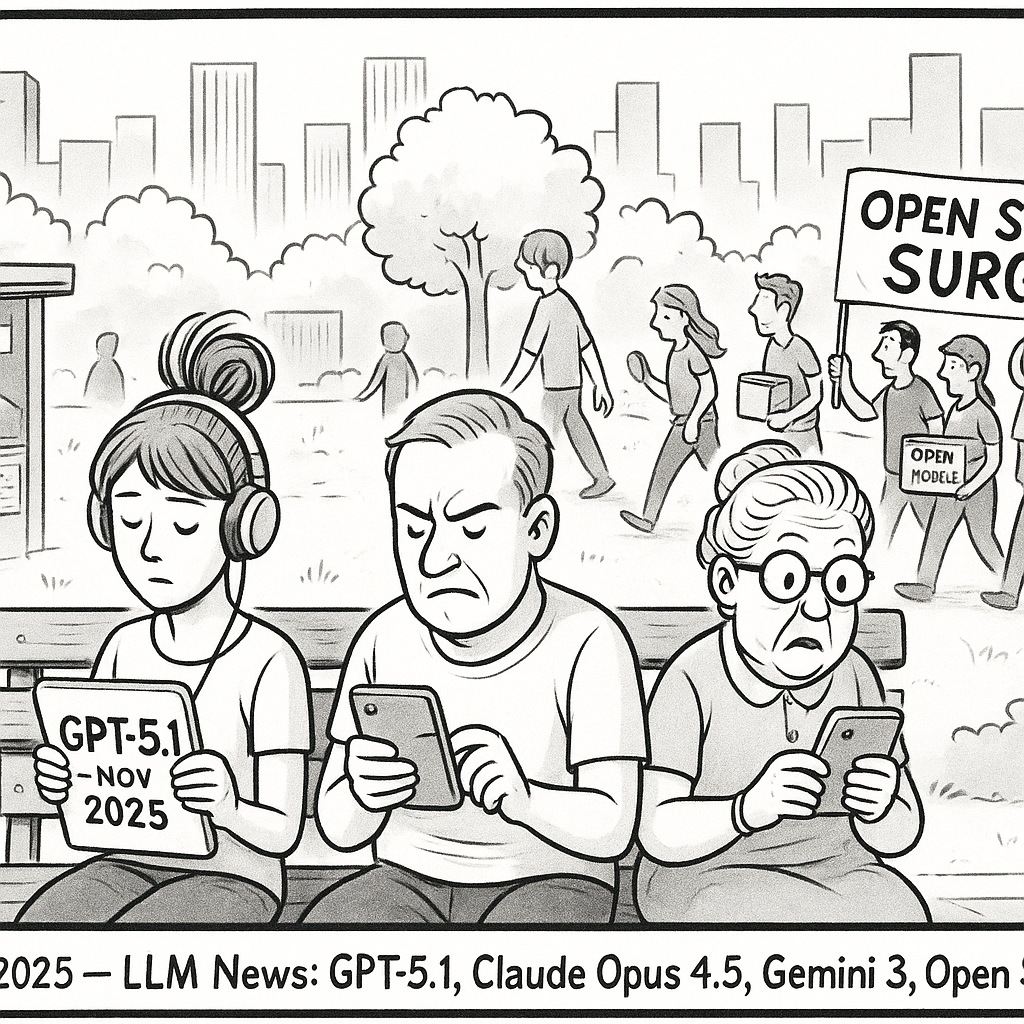

November 2025 LLM News: GPT-5.1, Claude Opus 4.5, Gemini 3, and the Open Source Surge

Imagine waking up to a world where your AI assistant not only chats like a witty friend but also tackles marathon coding sessions or analyzes videos on the fly. That's the reality taking shape in November 2025, as the major players in the large language model (LLM) arena ship game-changing updates. From OpenAI's conversational upgrade to Anthropic's coding powerhouse, these developments aren't just tech tweaks; they're redefining how we interact with AI at work, in creative projects, and in everyday life. If you follow GPT, Claude, Gemini, or open models like Llama and Mistral, buckle up: the pace of innovation is accelerating, bringing smarter tools to everyone from casual users to teams that train and fine-tune their own models.

This month alone has seen a flurry of announcements that highlight the competitive edge in the LLM space. With enterprises racing to integrate these models into workflows, and developers fine-tuning them for niche applications, the stakes are high. Let's dive into the highlights, drawing from official releases and expert analyses to unpack what these updates mean for you.

OpenAI's GPT-5.1: Elevating Conversational AI to New Heights

OpenAI opened the month's big releases on November 12 with GPT-5.1, an evolution of its flagship large language model designed to feel more human and to handle reasoning with more finesse. Rather than a single incremental update, it is a refined take on the GPT series split into two variants: GPT-5.1 Instant for quick, everyday tasks and GPT-5.1 Thinking for deeper, adaptive problem-solving.

At its core, GPT-5.1 Instant brings a warmer, more playful tone to interactions, making chats feel less robotic and more engaging. According to OpenAI's announcement, it excels at creative writing and brainstorming, and users can customize the model's voice: sarcastic quips for fun sessions or professional polish for business reports. This customization works through tone settings and instructions rather than fine-tuning, so users and developers can tailor the model's style without training anything themselves.

The star here, though, is GPT-5.1 Thinking. It dynamically adjusts its "thinking time" based on task complexity: zipping through simple queries while dwelling longer on intricate ones, like debugging code or explaining quantum physics. As reported by HPCwire in their November 25 roundup, this leads to clearer outputs with less jargon, improving instruction-following by up to 20% in benchmarks. For enterprises, this means more reliable AI agents that can manage multi-step workflows without constant human oversight.

But what's the real-world impact? Picture a marketer using GPT-5.1 to generate personalized email campaigns that adapt to user feedback in real time, or a student getting step-by-step guidance on essay outlines. Available across ChatGPT tiers from Free to Pro, this update puts advanced LLM capabilities within wider reach. OpenAI notes that the legacy GPT-5 models will remain available for a few months, giving users time to transition. Taken together, the release signals OpenAI's push toward more intuitive, versatile large language models.

Anthropic's Claude Opus 4.5: The Ultimate Tool for Coders and Agents

Just days ago, on November 24, Anthropic stole the spotlight with Claude Opus 4.5, its latest flagship LLM, touted as the "best model in the world for coding, agents, and long-horizon tasks." Building on the Claude series' reputation for safety and reliability, this release cuts per-token prices to a third of its predecessor's while boosting speed, turning hours-long tasks into 30-minute sprints.

What sets Claude Opus 4.5 apart is its specialized architecture for tool-use and memory management. It handles massive contexts, like analyzing multiple files or maintaining coherence over extended storytelling sessions, with up to 65% fewer tokens needed for high-accuracy outputs. As detailed in Anthropic's official announcement, the model shines in office workflows, from manipulating spreadsheets to crafting slides, making it a dream for productivity pros. Developers praise its agentic prowess: it can orchestrate tools seamlessly, like querying databases or running simulations, all while staying aligned with ethical guidelines.
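How do a lower per-token price and fewer tokens combine? The sketch below works through the arithmetic under two stated assumptions: that Opus 4.5's per-token price is a third of its predecessor's, and that the "up to 65% fewer tokens" figure applies to the same workload and the same baseline model. The function name `effective_cost_ratio` is purely illustrative. Because "up to" describes a best case, treat the second result as a ceiling on savings, not a typical outcome.

```python
def effective_cost_ratio(price_ratio: float, token_reduction: float) -> float:
    """Return new_cost / old_cost for the same task (illustrative helper).

    price_ratio: new per-token price divided by old per-token price,
        e.g. 1/3 if the new model costs a third as much per token.
    token_reduction: fraction of tokens saved, e.g. 0.65 for "65% fewer".
    Assumption: both figures describe the same workload and baseline model.
    """
    if price_ratio < 0:
        raise ValueError("price_ratio must be non-negative")
    if not 0.0 <= token_reduction <= 1.0:
        raise ValueError("token_reduction must be between 0 and 1")
    # Cost scales with price per token times the number of tokens used.
    return price_ratio * (1.0 - token_reduction)


if __name__ == "__main__":
    # Price cut alone, same token count: cost falls to a third.
    print(f"{effective_cost_ratio(1 / 3, 0.0):.3f}")  # prints 0.333
    # Best case, stacking the price cut with 65% fewer tokens.
    print(f"{effective_cost_ratio(1 / 3, 0.65):.3f}")  # prints 0.117
```

In other words, if both claims held for the same job, that job would cost roughly 12% of what it did before. The price cut alone brings it to about 33%.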

HPCwire's analysis echoes this, highlighting how Claude Opus 4.5 excels in large software projects, where traditional LLMs falter on long-term planning. In coding benchmarks, for instance, it achieves higher pass rates on complex algorithmic problems, rivaling human engineers. Availability is broad: the model is rolling out on Amazon Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry, so teams can start building with it on the cloud platform they already use rather than committing to a single vendor.

This release underscores Anthropic's focus on the "model as collaborator," where the LLM augments human creativity rather than replacing it. In a world increasingly reliant on AI for development, Claude Opus 4.5 could speed up the adoption of LLM-based coding agents. Unlike open-weight models, though, it is accessed through Anthropic's apps, API, and cloud partners rather than as weights teams can download and fine-tune. And as Simon Willison noted in his November 24 review, evaluating these beasts remains tricky: benchmarks evolve as fast as the models themselves.

Google's Gemini 3: Multimodal Innovation Meets Agentic Power

Google wasn't sitting idle; on November 18, they unveiled Gemini 3, positioning it as their most intelligent large language model yet. This multimodal powerhouse processes text, images, videos, and code in unified workflows, with "Deep Think" mode cranking up reasoning for tough challenges.

Gemini's strength lies in its agentic capabilities—think AI that doesn't just answer questions but acts on them, like summarizing a video clip while generating related code snippets. According to Google's release notes, it outperforms predecessors in benchmarks for math, reading comprehension, and long-context tasks, thanks to enhanced training on diverse datasets. The November 21 Gemini Drop blog post details UI overhauls, including a "My Stuff" tab for storing AI-generated content, making it user-friendly for creators and devs alike.

For developers, integration is key: Gemini 3 is available in Google AI Studio and Vertex AI, making it straightforward to build into enterprise apps. HPCwire reports its edge in agentic workflows, where it coordinates tools across modalities, which suits industries like healthcare (analyzing scans) or e-commerce (visual search). Safety features, including rigorous evaluations, aim to address concerns about hallucinations in multimodal LLMs.

Compared to rivals like GPT or Claude, Gemini 3's ecosystem tie-ins (e.g., AI Mode in Search) give it a practical boost. As 9to5Google covered on November 22, premium tiers such as Google AI Pro unlock extra features, blending accessibility with power. This update reinforces Google's bet on holistic AI, where large language models evolve into full-spectrum assistants.

Open Source LLMs: Olmo 3 Leads the Charge for Transparency

Amid proprietary giants, open source LLMs are stealing hearts with accessibility and auditability. The Allen Institute for AI's Olmo 3, launched November 20, exemplifies this: a fully open family (7B and 32B parameters) with base, Instruct, RL-Zero, and Think variants, all under permissive licenses.

Olmo 3 tops fully open base models in programming, math, and long-context tasks (up to 65K tokens), according to HPCwire's roundup. Its transparency (Ai2 releases the weights, training data, and code) lets researchers inspect and fine-tune the models without black-box frustrations. Variants like Think strengthen reasoning, making Olmo 3 a solid alternative to open-weight families like Mistral or Llama for custom language model training.

Meta's Llama 4 series, highlighted in a November 6 DataStudios post, continues to influence with efficient variants for scale. Meanwhile, Mistral AI's November 19 partnership with Dassault Systèmes brings sovereign AI to enterprises, emphasizing open yet secure LLMs. These efforts lower barriers, fostering innovation in model fine-tuning for niche domains like coding (e.g., Code Llama derivatives).

As Hugging Face's CEO told TechCrunch on November 18, we're in an "LLM bubble," but open source could help deflate it by shifting the focus to specialized, efficient models. That kind of democratization could spread AI's benefits well beyond big tech.

Navigating the LLM Bubble: Challenges and Opportunities Ahead

November's releases come amid warnings of an LLM bubble. Hugging Face CEO Clem Delangue, in an Ars Technica interview on November 20, argued that hype around massive LLMs overshadows smaller, specialized ones—echoing TechCrunch's November 18 coverage. While GPT-5.1 and Claude Opus 4.5 dazzle, concerns like energy costs and ethical alignment persist.

Yet research into LLM introspection, such as the work on model self-awareness that Ars Technica reported on November 3, hints at more reliable futures. Embodiment experiments, per TechCrunch's November 7 piece, show LLMs venturing into robotics, blending digital smarts with physical action.

As we close 2025, these updates signal a maturing LLM ecosystem. With open source LLMs like Llama and Mistral gaining traction, and proprietary giants like GPT, Claude, and Gemini iterating rapidly, the focus shifts to practical integration. Will the bubble pop, or inflate further? One thing's clear: language model training and fine-tuning will drive the next wave of AI transformation. Stay tuned—your next breakthrough could be just a prompt away.
